Ariadne

An application for incident response investigation.

Introduction

Incident response has a different kind of pressure to it. The alerts come in fast, rarely clean, and almost never in isolation. One signal turns into five. Five turns into fifty. Logs, endpoint alerts, memory artifacts, SIEM queries all serve as pieces of a puzzle. And you don’t have the box image. You start pulling threads. Maybe it’s nothing. Maybe it’s everything. Either way, the clock is running. So you pivot between tools, cross-reference outputs, take notes, build timelines in your head, and try to answer the only question that really matters: What actually happened here?

The Problem

That is where the work actually begins. The problem is not a lack of visibility. It is fragmentation. Chainsaw output in one place. EVTX logs in another. EDR alerts in a dashboard. SIEM queries somewhere else entirely. Each tool surfaces signal, but none of them assemble the story. So the investigation lives in your head. You correlate events manually, track hypotheses mentally, and rebuild timelines every time you revisit the incident. Context does not persist. It decays. And when context decays, so does confidence. Ariadne came out of that gap: treating every artifact as part of a single investigative thread. Something that can ingest disparate evidence, maintain context over time, and help reconstruct what actually happened without starting over every single time you sit back down.

The Stack

- Storage

- SQLite - Server-side persistence

- LLM - Context correlation, artifact review, and investigative summaries

- Backend

- SQLAlchemy - Persistence through ORM and modeling

- FastAPI - Framework for API infrastructure

- Frontend

- React - UI component library

- Vite - Development server and build tool

My Role

I followed the same model as my previous projects. I architected the application end-to-end and handled all engineering decisions. That meant designing the context window management strategy, deciding what gets persisted and how it feeds back into prompts, and mapping the ingestion pipeline across artifact formats. Claude generated the code from the architecture I defined. I drove generation through precise prompt engineering, one feature at a time, and reviewed everything as a repository maintainer before it landed.

Current Status

MVP: In Progress. The engine runs. The analysis takes shape. Now we find out if it holds under real pressure.

The Process

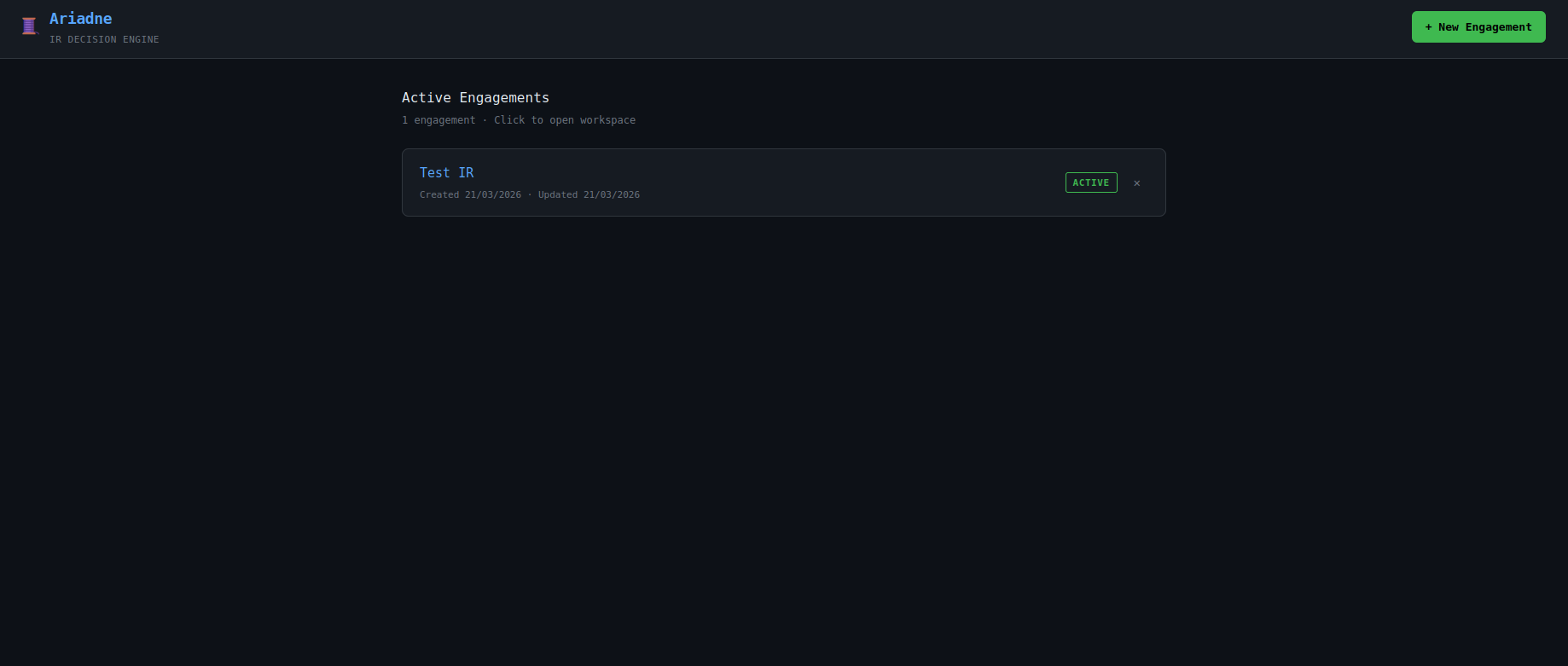

The Landing Page

The landing page acts as the investigation command center. Active incidents, open cases, and current workload are surfaced in a single view so priorities can be assessed quickly and work can be triaged without losing context.

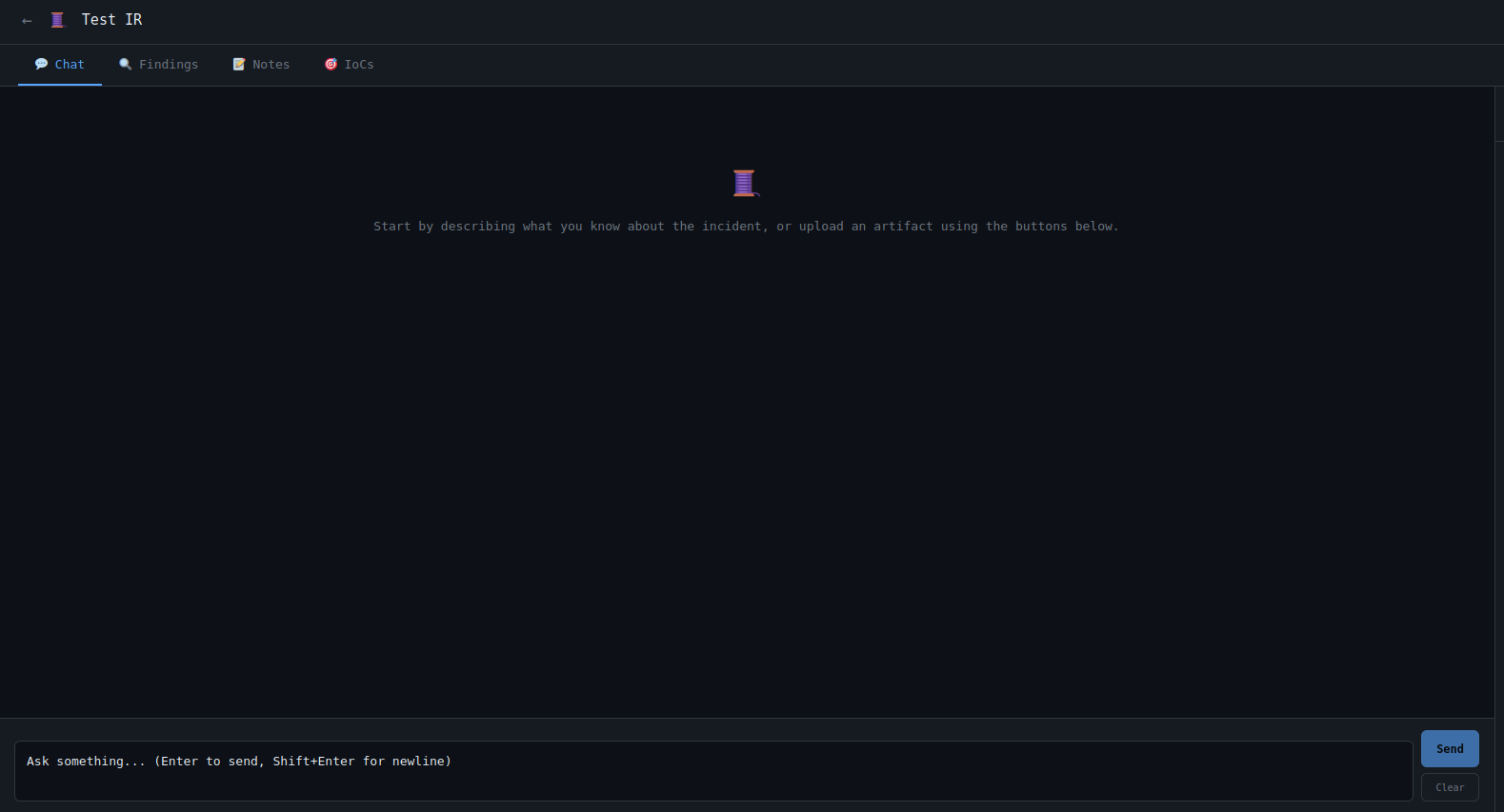

Individual Investigation

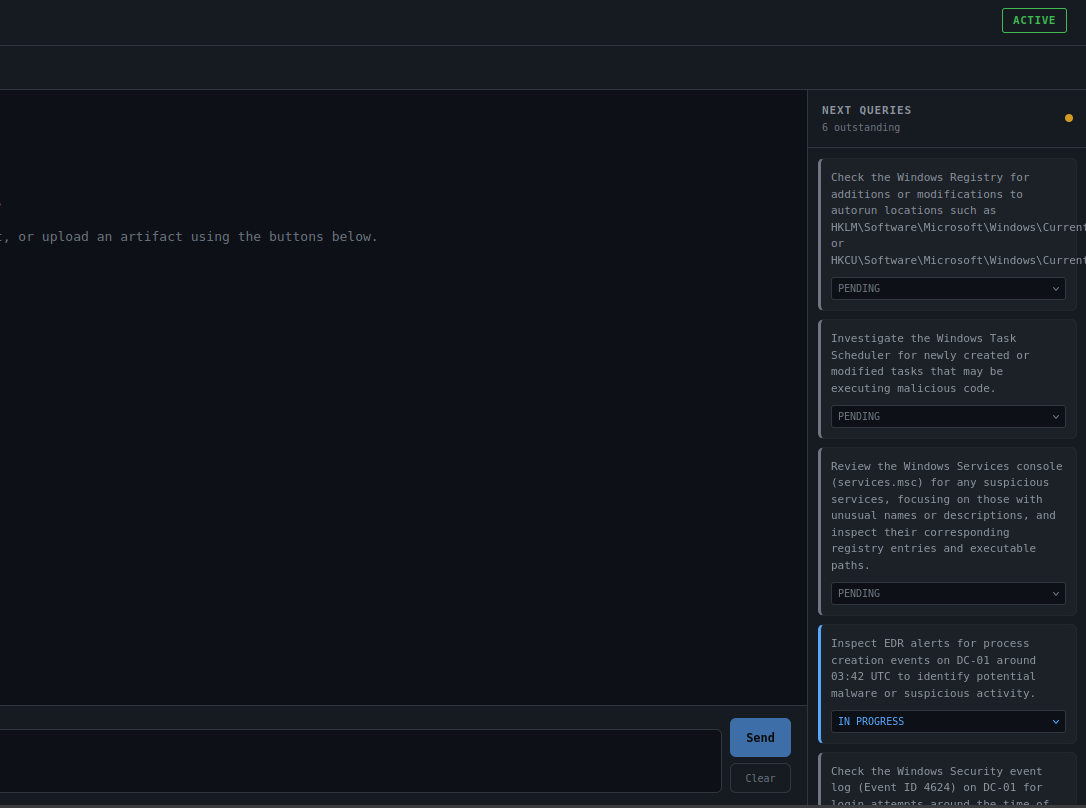

Each investigation has a dedicated workspace where evidence, notes, tracked indicators, and prior outputs remain attached to the case. The embedded chat interface allows analysts to query the investigation directly, refine hypotheses, and request targeted analysis.

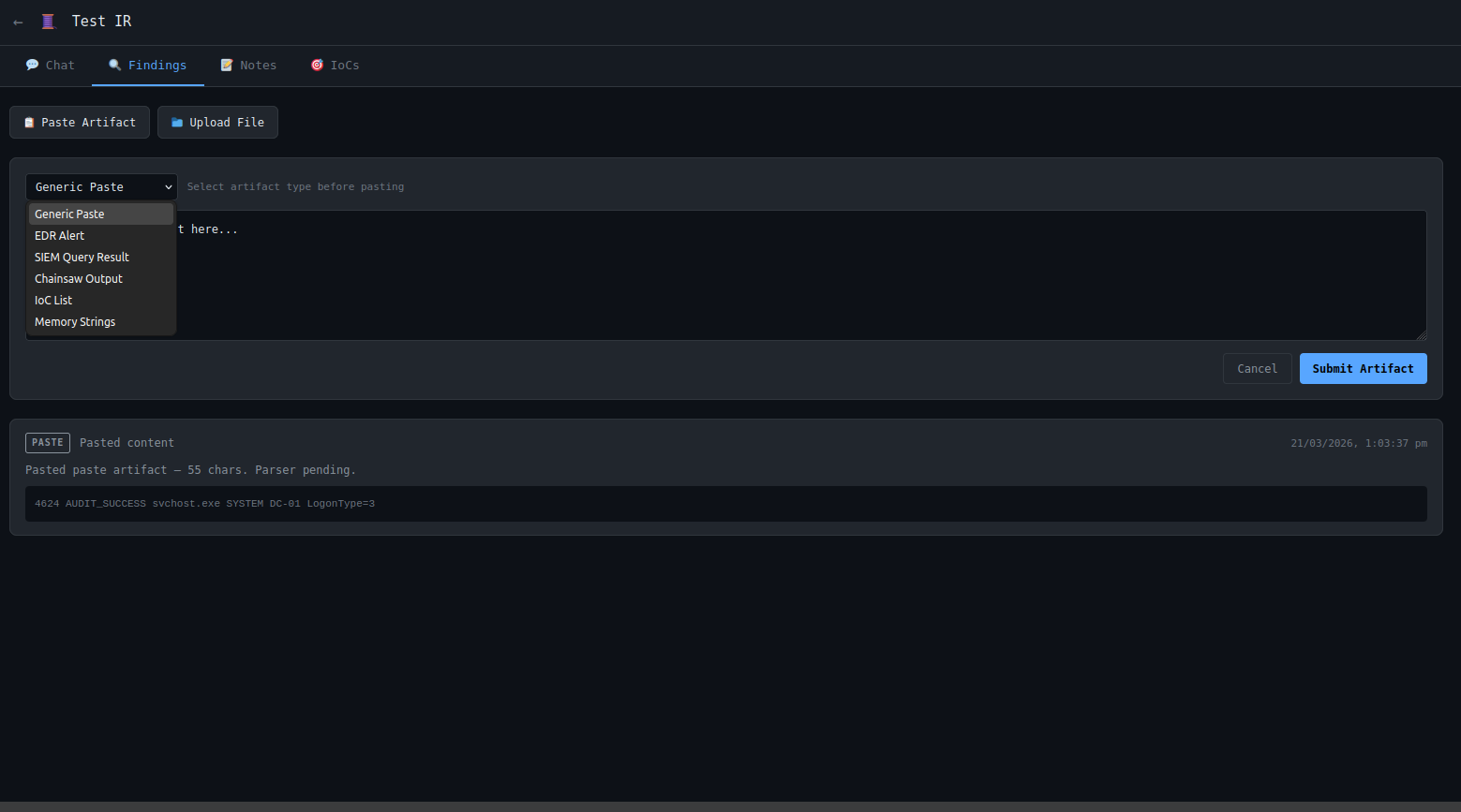

Artifact Ingestion

The ingestion interface accepts pasted artifacts from common incident response workflows. Logs, alerts, extracted strings, query results, and other evidence can be submitted directly into the case where they are normalized and folded into the broader investigation context.

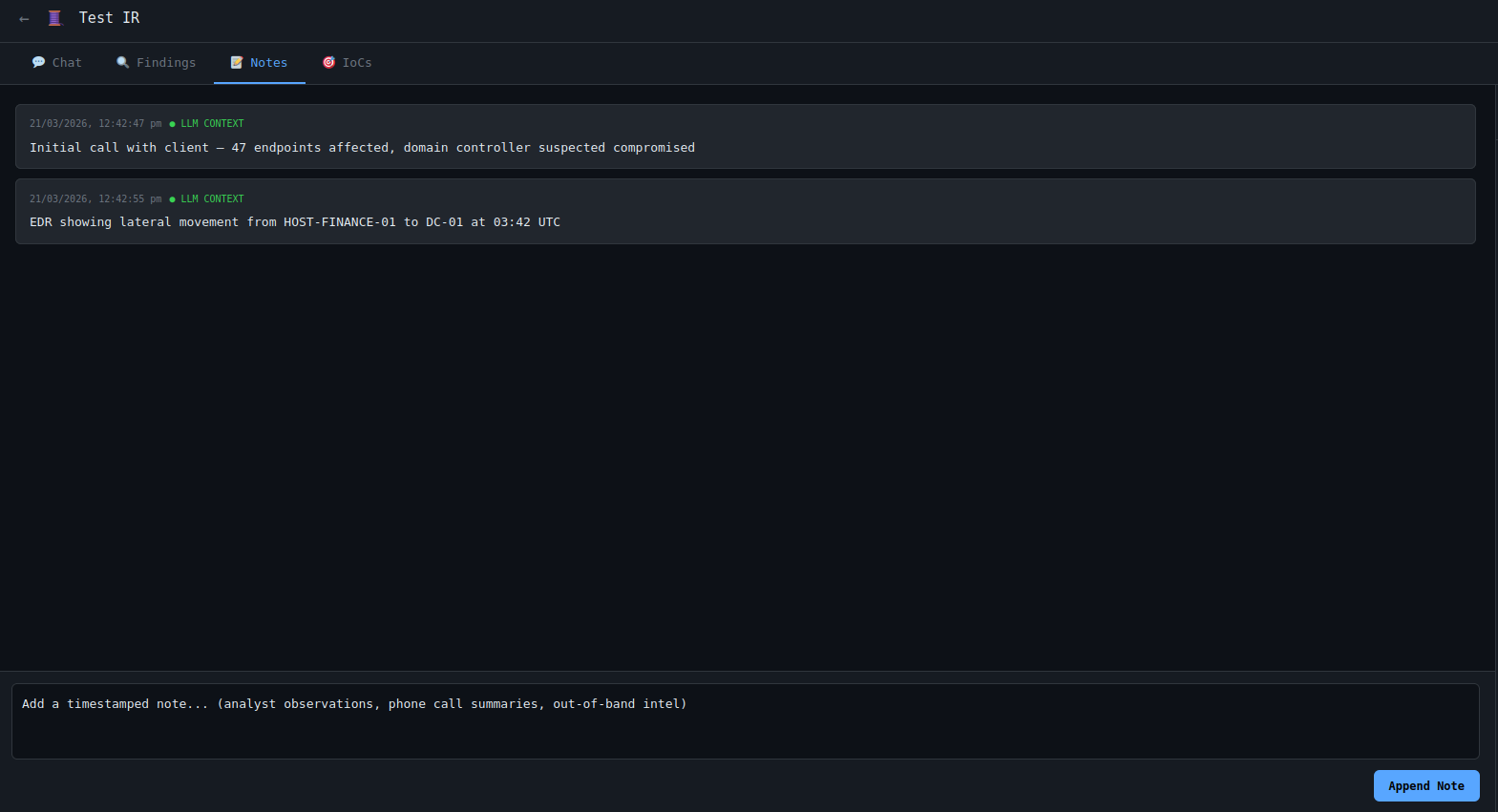

Notes & Analyst Context

Not every useful signal comes from a parser. Freeform notes allow analysts to preserve reasoning, observations, side-channel findings, and contextual details that would otherwise remain outside the system.

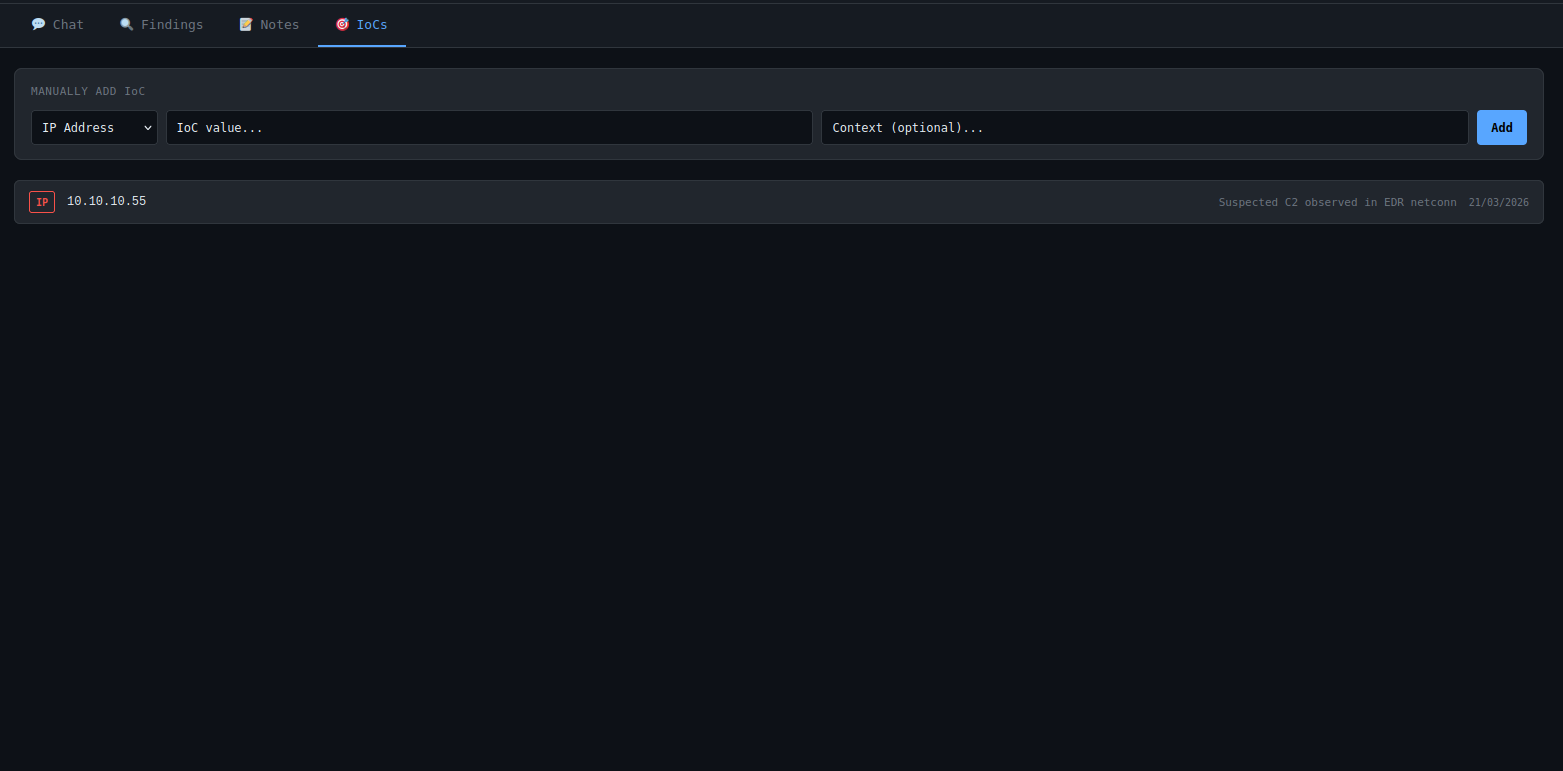

IOC Tracking

Indicators of compromise can be tracked throughout the investigation lifecycle to ensure they remain visible, actionable, and ready for downstream use in blocking, hunting, or detection engineering workflows.

Investigation Continuity

Prior findings, generated tasks, hypotheses, and completed actions are persisted back into the case context. This reduces repetitive outputs, preserves momentum between sessions, and allows the investigation to progress cumulatively instead of restarting each time.
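
One way to picture the "reduces repetitive outputs" part is a filter that drops newly generated tasks the case has already seen. This is a minimal sketch under stated assumptions, not Ariadne's actual logic: the function names `normalize` and `filter_new_suggestions` are hypothetical, and treating tasks as plain strings is a simplification for the example.

```python
import re


def normalize(text: str) -> str:
    """Lowercase and collapse punctuation/whitespace so near-identical tasks compare equal."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def filter_new_suggestions(candidates: list[str], prior: list[str]) -> list[str]:
    """Return only the candidates whose normalized text is not already in the case."""
    seen = {normalize(p) for p in prior}
    fresh = []
    for candidate in candidates:
        key = normalize(candidate)
        # Skip empty strings, tasks already persisted, and duplicates within this batch.
        if key and key not in seen:
            seen.add(key)
            fresh.append(candidate)
    return fresh
```

Exact-match comparison after normalization is deliberately crude; a real system might compare on structured fields or embeddings instead.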

The Code

Backend

The database layer initializes SQLite, enables WAL mode so reads can proceed while a write is in flight, enforces foreign key integrity, and provisions the full schema on startup. This keeps deployment lightweight while preserving relational structure across incidents, artifacts, notes, IOCs, and generated outputs.

<snip>

```python
    conn = sqlite3.connect(DB_PATH)
    conn.row_factory = sqlite3.Row
    conn.execute("PRAGMA journal_mode=WAL;")
    conn.execute("PRAGMA foreign_keys=ON;")
    return conn


def init_db() -> None:
    conn = get_connection()
    try:
        cursor = conn.cursor()
        cursor.execute("""
            CREATE TABLE IF NOT EXISTS engagements (
                id INTEGER PRIMARY KEY AUTOINCREMENT,
                name TEXT NOT NULL,
                description TEXT,
                status TEXT NOT NULL DEFAULT 'active',
                lead_id TEXT,
                created_at TEXT NOT NULL DEFAULT (datetime('now')),
                updated_at TEXT NOT NULL DEFAULT (datetime('now'))
            );
        """)
```

<snip>
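
To show what the two pragmas buy in practice, here is a minimal, self-contained sketch. It is not the project's schema: the `artifacts` table, its columns, and the helper names `open_db` and `demo_foreign_keys` are assumptions invented for the example. Only the pragmas and the `engagements` table name come from the code above.

```python
import os
import sqlite3
import tempfile


def open_db(path: str) -> sqlite3.Connection:
    """Open SQLite with WAL journaling and foreign key enforcement switched on."""
    conn = sqlite3.connect(path)
    conn.row_factory = sqlite3.Row
    conn.execute("PRAGMA journal_mode=WAL;")
    conn.execute("PRAGMA foreign_keys=ON;")
    return conn


def demo_foreign_keys() -> tuple[str, bool]:
    """Return the active journal mode and whether an orphan insert was rejected."""
    with tempfile.TemporaryDirectory() as tmp:
        conn = open_db(os.path.join(tmp, "demo.db"))
        try:
            mode = conn.execute("PRAGMA journal_mode;").fetchone()[0]
            # Hypothetical child table: every artifact must belong to an engagement.
            conn.executescript("""
                CREATE TABLE engagements (
                    id INTEGER PRIMARY KEY AUTOINCREMENT,
                    name TEXT NOT NULL
                );
                CREATE TABLE artifacts (
                    id INTEGER PRIMARY KEY AUTOINCREMENT,
                    engagement_id INTEGER NOT NULL REFERENCES engagements(id),
                    content TEXT NOT NULL
                );
            """)
            rejected = False
            try:
                # No engagement with id 999 exists, so this row would be orphaned.
                conn.execute(
                    "INSERT INTO artifacts (engagement_id, content) VALUES (?, ?)",
                    (999, "orphan"),
                )
            except sqlite3.IntegrityError:
                rejected = True
            return mode, rejected
        finally:
            conn.close()
```

SQLite leaves foreign key enforcement off by default and the setting is per connection, which is why the pragma has to run every time a connection is opened rather than once at schema creation.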

The service layer centralizes CRUD operations and case lifecycle management. Raw SQL was used intentionally for transparency, auditability, and predictable query behavior within a security-focused application.

<snip>

```python
def create_engagement(
    self,
    name: str,
    description: Optional[str] = None,
    status: str = "active",
    lead_id: Optional[str] = None
) -> dict:
    conn = get_connection()
    try:
        cursor = conn.cursor()
        cursor.execute("""
            INSERT INTO engagements (name, description, status, lead_id)
            VALUES (?, ?, ?, ?)
        """, (name, description, status, lead_id))
        conn.commit()

        # Fetch and return the newly created row
        # cursor.lastrowid gives us the auto-incremented ID
        row = cursor.execute(
            "SELECT * FROM engagements WHERE id = ?",
            (cursor.lastrowid,)
        ).fetchone()
        return self._row_to_dict(row)
    finally:
        conn.close()
```

<snip>
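
The same raw-SQL approach extends to updates, where the set of changed columns varies per call. The sketch below is one possible shape, not the project's code: `update_engagement` and `UPDATABLE_COLUMNS` are hypothetical names, and the function takes a connection as an argument purely to keep the example self-contained. Column names are checked against an allowlist before being placed in the statement, and values are always bound as parameters.

```python
import sqlite3
from typing import Any, Optional

# Columns a caller may change; anything else is rejected before SQL is built.
UPDATABLE_COLUMNS = {"name", "description", "status", "lead_id"}


def update_engagement(
    conn: sqlite3.Connection, engagement_id: int, **fields: Any
) -> Optional[dict]:
    """Update allowlisted columns and return the row, or None if the id is unknown.

    Assumes conn.row_factory is sqlite3.Row so the result converts to a dict.
    """
    unknown = set(fields) - UPDATABLE_COLUMNS
    if unknown:
        raise ValueError(f"Unsupported columns: {sorted(unknown)}")
    if not fields:
        raise ValueError("No fields to update")

    # Column names come from the allowlist; values are bound parameters.
    assignments = ", ".join(f"{col} = ?" for col in fields)
    params = list(fields.values()) + [engagement_id]
    conn.execute(
        f"UPDATE engagements SET {assignments}, updated_at = datetime('now') "
        "WHERE id = ?",
        params,
    )
    conn.commit()

    row = conn.execute(
        "SELECT * FROM engagements WHERE id = ?", (engagement_id,)
    ).fetchone()
    return dict(row) if row else None
```

A call such as `update_engagement(conn, 1, status="contained")` would also refresh `updated_at`, which the schema defaults at insert time but does not maintain on its own.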

The LLM integration layer handles model access, token budgeting, and prompt execution. Separate model tiers were used for deeper investigative reasoning versus low-latency extraction tasks.

<snip>

```python
import os
from typing import Optional, Generator

from dotenv import load_dotenv
from groq import Groq

load_dotenv()

MODEL_PRIMARY = "llama-3.3-70b-versatile"
MODEL_FAST = "llama-3.1-8b-instant"
DEFAULT_MODEL = MODEL_PRIMARY

MODEL_CONTEXT_LIMITS = {
    MODEL_PRIMARY: 28000,
    MODEL_FAST: 14000,
}
```

<snip>
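
Token budgeting against those limits could look something like the sketch below. It is an illustration under loud assumptions: the four-characters-per-token estimate is a rough heuristic rather than a real tokenizer, the `reserve_for_reply` default is made up, and `estimate_tokens` and `select_context` are hypothetical names. Only the model names and limits are taken from the snippet above.

```python
from typing import Iterable

# Limits copied from the configuration above; everything else is illustrative.
MODEL_CONTEXT_LIMITS = {
    "llama-3.3-70b-versatile": 28000,
    "llama-3.1-8b-instant": 14000,
}


def estimate_tokens(text: str) -> int:
    """Rough heuristic: about four characters per token (an assumption, not a tokenizer)."""
    return max(1, len(text) // 4)


def select_context(
    chunks: Iterable[str], model: str, reserve_for_reply: int = 2000
) -> list[str]:
    """Keep the newest chunks that fit the model's budget, returned oldest first."""
    budget = MODEL_CONTEXT_LIMITS[model] - reserve_for_reply
    selected: list[str] = []
    used = 0
    # Walk from newest to oldest so recent evidence wins when space runs out.
    for chunk in reversed(list(chunks)):
        cost = estimate_tokens(chunk)
        if used + cost > budget:
            break
        selected.append(chunk)
        used += cost
    selected.reverse()
    return selected
```

Favoring recency is only one policy. An investigation tool might instead pin certain items, such as confirmed findings, and fill the remaining budget with recent material.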

The parser layer normalizes heterogeneous evidence sources into structured records, timeline events, and extracted indicators. This allows disparate artifacts to be folded into a consistent investigation context.

<snip>

```python
import json
from typing import Optional

from parsers.ioc_parser import extract_iocs


def parse_chainsaw(raw_content: str) -> dict:
    try:
        data = json.loads(raw_content)
    except json.JSONDecodeError as e:
        return _error_result(f"Invalid JSON: {e}")

    if not isinstance(data, list):
        if isinstance(data, dict) and 'hits' in data:
            data = data['hits']
        else:
            return _error_result("Expected JSON array of Chainsaw records")

    records = []
    timeline_events = []
    all_iocs = []

    for item in data:
        try:
            record = _parse_record(item)
            if record:
                records.append(record)
                if record.get('timestamp'):
                    timeline_events.append({
                        'event_time': record['timestamp'],
                        'event_type': _map_event_type(record.get('event_id')),
                        'description': record.get('detection_name') or f"Event ID {record.get('event_id', 'unknown')}",
                        'host': record.get('computer'),
                        'actor': record.get('subject_user') or record.get('target_user'),
                        'process': record.get('process_name'),
                    })
                iocs = extract_iocs(json.dumps(item), context=f"Chainsaw event ID {record.get('event_id')}")
                all_iocs.extend(iocs)
        except Exception:
            # Skip records that fail to parse instead of aborting the whole import
            continue
```

<snip>
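
The `extract_iocs` helper is not shown in this post. For readers who want a feel for what indicator extraction involves, here is a minimal regex-based sketch. It is an assumption-laden stand-in, not the real parser: the name `extract_iocs_sketch`, the three indicator types, and the returned dictionary shape are all invented for the example.

```python
import re

# Hypothetical pattern set; a real extractor would cover many more indicator types.
IOC_PATTERNS = {
    "ipv4": re.compile(
        r"\b(?:(?:25[0-5]|2[0-4]\d|1?\d?\d)\.){3}(?:25[0-5]|2[0-4]\d|1?\d?\d)\b"
    ),
    "sha256": re.compile(r"\b[a-fA-F0-9]{64}\b"),
    "md5": re.compile(r"\b[a-fA-F0-9]{32}\b"),
}


def extract_iocs_sketch(text: str, context: str = "") -> list[dict]:
    """Pull de-duplicated indicators out of raw text, tagging each with its source context."""
    seen = set()
    results = []
    for ioc_type, pattern in IOC_PATTERNS.items():
        for match in pattern.finditer(text):
            value = match.group(0).lower()
            # The same address often appears in several fields of one event.
            if (ioc_type, value) in seen:
                continue
            seen.add((ioc_type, value))
            results.append({"type": ioc_type, "value": value, "context": context})
    return results
```

Regex extraction like this will produce false positives, for example version strings that look like IP addresses, so analyst review of extracted indicators still matters.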

FastAPI exposes the operational API surface for investigations, notes, artifacts, timelines, tracked indicators, and AI-assisted analysis workflows.

<snip>

```python
from fastapi import APIRouter, HTTPException

from db.database_service import DatabaseService
from models.schemas import (
    EngagementCreate,
    EngagementUpdate,
    EngagementResponse,
)

router = APIRouter(
    prefix="/engagements",
    tags=["engagements"],  # Groups these endpoints in /docs
)

db = DatabaseService()


@router.get("", response_model=list[EngagementResponse])
async def list_engagements():
    return db.list_engagements()
```

<snip>

The application bootstrap layer initializes persistence, configures middleware, registers route groups, and exposes the full backend service.

<snip>

```python
app = FastAPI(
    title="Ariadne IR Decision Engine",
    description="LLM-assisted Incident Response platform",
    version="0.1.0",
    lifespan=lifespan,
)

app.add_middleware(
    CORSMiddleware,
    allow_origins=[
        "http://localhost:5173",  # Vite dev server
        "http://localhost:3000",  # fallback
    ],
    allow_credentials=True,
    allow_methods=["*"],
    allow_headers=["*"],
)

app.include_router(artifacts_router, prefix="/api")
app.include_router(chat_router, prefix="/api")
app.include_router(engagements_router, prefix="/api")
app.include_router(iocs_router, prefix="/api")
app.include_router(notes_router, prefix="/api")
app.include_router(suggestions_router, prefix="/api")
app.include_router(timeline_router, prefix="/api")
app.include_router(playbook_router, prefix="/api")
```

<snip>

Frontend

A shared API client provides consistent headers, timeout controls, and interceptor-based error logging for all frontend requests.

```javascript
import axios from 'axios'

const api = axios.create({
  baseURL: '',
  headers: { 'Content-Type': 'application/json' },
  timeout: 30000,
})

// Response interceptor: log the failure, then re-reject so callers can handle it
api.interceptors.response.use(
  response => response,
  error => {
    console.error('[api]', error.response?.status, error.response?.data)
    return Promise.reject(error)
  }
)

export default api
```

The application centers around two primary workflows: the incident queue and the individual case workspace. The queue supports triage and prioritization, while the workspace consolidates investigation activity into a single operating view.

<snip>

```jsx
import { useState, useEffect, useRef } from 'react'
import { useParams, useNavigate } from 'react-router-dom'
import { useQuery, useMutation, useQueryClient } from '@tanstack/react-query'
import api from '../api/client'

const STATUS_CONFIG = {
  active: { color: 'var(--status-active)', label: 'ACTIVE' },
  contained: { color: 'var(--status-contained)', label: 'CONTAINED' },
  closed: { color: 'var(--status-closed)', label: 'CLOSED' },
  archived: { color: 'var(--status-archived)', label: 'ARCHIVED' },
}

const SUGGESTION_STATUS_COLORS = {
  pending: 'var(--text-muted)',
  in_progress: 'var(--accent-blue)',
  tried: 'var(--accent-orange)',
  worked: 'var(--accent-green)',
  failed: 'var(--accent-red)',
  dismissed: 'var(--text-muted)',
}

function ChatTab({ engagementId }) {
  const [input, setInput] = useState('')
  const [streaming, setStreaming] = useState(false)
  const [streamingContent, setStreamingContent] = useState('')
  const messagesEndRef = useRef(null)
  const queryClient = useQueryClient()

  const { data: messages = [] } = useQuery({
    queryKey: ['messages', engagementId],
    queryFn: async () => (await api.get(`/api/engagements/${engagementId}/messages`)).data,
  })

  // Scroll to bottom when messages change
  useEffect(() => {
    messagesEndRef.current?.scrollIntoView({ behavior: 'smooth' })
  }, [messages, streamingContent])
```

<snip>

Routing separates queue management from deep investigative workspaces while preserving a lightweight single-page application model. Case status indicators were color-coded to improve at-a-glance workload scanning across active investigations.

```jsx
import { BrowserRouter, Routes, Route } from 'react-router-dom'
import { QueryClient, QueryClientProvider } from '@tanstack/react-query'
import EngagementList from './pages/EngagementList'
import EngagementWorkspace from './pages/EngagementWorkspace'

const queryClient = new QueryClient({
  defaultOptions: {
    queries: {
      retry: 1,
      staleTime: 30000,
    }
  }
})

export default function App() {
  return (
    <QueryClientProvider client={queryClient}>
      <BrowserRouter>
        <Routes>
          <Route path="/" element={<EngagementList />} />
          <Route path="/engagement/:id" element={<EngagementWorkspace />} />
        </Routes>
      </BrowserRouter>
    </QueryClientProvider>
  )
}
```

The frontend entrypoint mounts the React application and loads global styling for the interface.

```jsx
import { StrictMode } from 'react'
import { createRoot } from 'react-dom/client'
import './index.css'
import App from './App.jsx'

createRoot(document.getElementById('root')).render(
  <StrictMode>
    <App />
  </StrictMode>,
)
```

And now we find out where all the loose threads take us.